
Running SSH in an Alpine Docker Container

When deploying your web application, you will likely be using Docker for containerization. Many base Docker images, such as Node or Python, come in Alpine Linux variants. Alpine is a great Linux distro that is secure and extremely lightweight.

However, many Docker images ship Alpine without its OpenRC init system. This causes a few issues when you try to run sidecar services alongside your primary process in a Docker container.


Case study

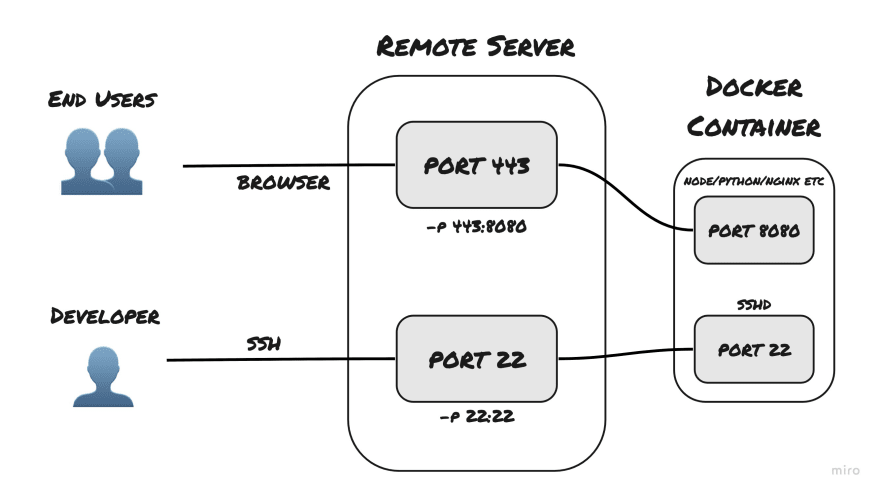

Let's imagine that we have a remote server hosted somewhere, which we use to deploy our production application. To run the application in an isolated environment, we containerize it, whether it is a Node.js, Python, nginx or any other app. To administer processes, we want direct access to the production environment, which is the Docker container in this case. SSH is the way to go here, but how should we set it up?

We could SSH into the remote server and then use `docker exec`, but that would not be a particularly secure or elegant solution. Perhaps we should forward the SSH connection to the Docker container itself?

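Binding ports is fairly easy: we bind not only port 443 (or whichever port your use case needs) but also port 22. The run command would look something like `docker run -p 443:<docker_app_port> -p 22:22 <image_id>`. Host port 22 has to be free for this; if the server runs its own sshd there, map the container's port 22 to a different host port.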

The more challenging part is setting up the actual SSH server inside the container. We will take a simple Node.js Dockerfile as a base.

```dockerfile
FROM node:12.22-alpine

# added code goes here

WORKDIR /app
COPY . .
RUN yarn
RUN yarn build
CMD ["yarn", "start"]
```

Configuring SSH

To emulate the connection to a remote server, we will run this Docker image locally and connect via localhost.

Configuring user

First, to be able to log in as root (or any other user), we have to unlock the user and add authorized SSH keys (unless you want to log in with plain passwords, which is very insecure).

```dockerfile
# create user SSH configuration
RUN mkdir -p /root/.ssh \
    # only this user should be able to read this folder (it may contain private keys)
    && chmod 0700 /root/.ssh \
    # unlock the user
    && passwd -u root
```

Now the root user is unlocked and can be logged into, and there is a folder for SSH configuration. However, the root account now has an empty password, and password authentication is enabled by default. OpenSSH's defaults (`PermitEmptyPasswords no` and, since OpenSSH 7.0, `PermitRootLogin prohibit-password`) should reject such a login, but it is better not to depend on that: our goal is to disable password authentication explicitly and to add our public key as an authorized one for this user.

You may also use a different user for this purpose: just replace `/root` with the path to the desired user's home directory and `root` with that user's name.
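The directory setup above and the key installation in the Dockerfile step that follows can be sketched as a standalone POSIX shell function. The name `install_authorized_key` is hypothetical, and taking the home directory as a parameter covers the different-user case. Beyond what the Dockerfile does, this sketch refuses an empty key (which is what you get when `--build-arg ssh_pub_key` is forgotten) and restricts `authorized_keys` to mode 0600, since sshd's `StrictModes` check rejects key files that group or others can write.

```shell
# Sketch: set up key-based SSH login for the user whose home is HOME_DIR.
# Usage: install_authorized_key HOME_DIR "PUBLIC_KEY_LINE"
install_authorized_key() {
    home_dir=$1
    pub_key=$2

    # An empty key (e.g. a forgotten --build-arg) would leave nobody able to log in
    if [ -z "$pub_key" ]; then
        echo "install_authorized_key: public key is empty" >&2
        return 1
    fi

    # ~/.ssh readable only by its owner, as in the Dockerfile above
    mkdir -p "$home_dir/.ssh" || return 1
    chmod 0700 "$home_dir/.ssh" || return 1

    # printf writes the key verbatim; echo's escape handling varies between shells
    printf '%s\n' "$pub_key" > "$home_dir/.ssh/authorized_keys" || return 1
    # sshd's StrictModes rejects authorized_keys files writable by group/others
    chmod 0600 "$home_dir/.ssh/authorized_keys"
}
```

Inside the image you would call it as `install_authorized_key /root "$ssh_pub_key"` (or with another user's home directory), for example from a script copied into the image. For a non-root user you would most likely also have to `chown` the `.ssh` directory to that user, which this sketch leaves out.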

```dockerfile
# supply your pub key via `--build-arg ssh_pub_key="$(cat ~/.ssh/id_rsa.pub)"` when running `docker build`
ARG ssh_pub_key
RUN echo "$ssh_pub_key" > /root/.ssh/authorized_keys
```

Installing SSH

To disable password authentication via SSH, we first have to install an SSH server. I will be using OpenSSH, but other implementations should work similarly.

```dockerfile
RUN apk add --no-cache openrc openssh \
    && ssh-keygen -A \
    && echo "PasswordAuthentication no" >> /etc/ssh/sshd_config
```

Stripped Alpine Docker images like the Node one do not provide OpenRC by default, so we install it ourselves. Then we generate SSH host keys so that clients can authenticate our container as an SSH host. Finally, we append `PasswordAuthentication no` to the end of `sshd_config` to disable password authentication via SSH.
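Appending works here because the stock OpenSSH `sshd_config` ships the `PasswordAuthentication` line commented out. It is fragile in general: according to `sshd_config(5)`, sshd uses the first value it obtains for most keywords, so an appended line is silently ignored if an active one appears earlier. A more defensive sketch, using a hypothetical helper named `set_sshd_option`, writes the setting as the very first line (so it is also in the global section, before any `Match` block) and comments out any existing active lines for that keyword. It assumes a plain config file; note that it also comments out occurrences inside `Match` blocks.

```shell
# Sketch: make "KEYWORD VALUE" the effective global setting in an sshd_config-style file.
# Usage: set_sshd_option FILE KEYWORD VALUE
set_sshd_option() {
    file=$1
    keyword=$2
    value=$3
    tmp_file="$file.tmp.$$"

    {
        # sshd keeps the first value it reads for most keywords, so the new line goes first
        printf '%s %s\n' "$keyword" "$value"
        # Comment out existing active lines for the keyword; keywords are
        # case-insensitive and may be followed by whitespace or "="
        awk -v kw="$keyword" '{
            line = $0
            sub(/^[ \t]+/, "", line)
            split(line, parts, "[ \t=]")
            if (tolower(parts[1]) == tolower(kw)) print "#" $0
            else print $0
        }' "$file"
    } > "$tmp_file" || { rm -f "$tmp_file"; return 1; }

    # Copy back instead of mv so the original file keeps its owner and mode
    cat "$tmp_file" > "$file" && rm -f "$tmp_file"
}
```

For the Dockerfile above the call would be `set_sshd_option /etc/ssh/sshd_config PasswordAuthentication no`. Inside the running container, `sshd -T` prints the effective configuration, so `sshd -T | grep -i passwordauthentication` should show the option set to `no`.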

For real applications you would invest in pre-generating host keys, so that they do not change every time the image is rebuilt. However, this and managing keys more elegantly are out of scope for this post.

At this point we may start the sshd service.

```dockerfile
RUN rc-status \
    # touch softlevel because system was initialized without openrc
    && touch /run/openrc/softlevel \
    && rc-service sshd start
```

However, when you run the container and try to connect, you will get an error like `kex_exchange_identification`. If you `docker exec` into the container and run `rc-status`, you will see that the sshd service has crashed.

Running sshd

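The service is reported as crashed because a RUN instruction only executes while the image is being built: sshd was started in that build step and went away with it. When a container starts, Docker launches only its ENTRYPOINT/CMD process (plus whatever that process spawns), so nothing brings sshd back up. Running a single process per container is actually a good concept that facilitates microservices and Docker Compose setups. In this particular case, however, there is no way to run SSH in a different container, so the ENTRYPOINT or CMD command itself has to boot multiple processes.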

```dockerfile
FROM node:12.22-alpine

ARG ssh_pub_key

RUN mkdir -p /root/.ssh \
    && chmod 0700 /root/.ssh \
    && passwd -u root \
    && echo "$ssh_pub_key" > /root/.ssh/authorized_keys \
    && apk add --no-cache openrc openssh \
    && ssh-keygen -A \
    && echo "PasswordAuthentication no" >> /etc/ssh/sshd_config \
    && mkdir -p /run/openrc \
    && touch /run/openrc/softlevel

WORKDIR /app
COPY . .
RUN yarn
RUN yarn build

ENTRYPOINT ["sh", "-c", "rc-status; rc-service sshd start; yarn start"]
```

In this final Dockerfile I combined all the previous RUN commands into a single one to reduce the number of layers. Instead of running `rc-status && rc-service sshd start` in a RUN step, we do that in ENTRYPOINT inside `sh -c`. This way the Docker container executes a single main process, `sh -c`, which spawns the other processes as children.

As a side effect, this Node.js application will not receive SIGINT/SIGTERM signals (or any other signals from Docker) when the Docker container is stopped.
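If signal delivery matters, a common alternative (not what the Dockerfile above does) is to skip OpenRC at runtime: start sshd directly by its absolute path, which sshd requires, and then `exec` the app so it replaces the shell and becomes the container's main process. The sketch below wraps this in a hypothetical `run_with_sidecar` function; the sidecar command is a parameter so the pattern can be tried without sshd. Unlike the `;` separators in the ENTRYPOINT above, it does not start the app when the sidecar fails.

```shell
# Sketch: start a sidecar that daemonizes itself, then hand the process over to the app.
# Usage: run_with_sidecar SIDECAR_COMMAND MAIN_COMMAND [ARGS...]
run_with_sidecar() {
    sidecar=$1
    shift

    # Left unquoted on purpose so SIDECAR_COMMAND can carry arguments.
    # sshd started without -D forks into the background, so this call returns.
    if ! $sidecar; then
        echo "run_with_sidecar: sidecar failed: $sidecar" >&2
        return 1
    fi

    # exec replaces the shell, so the main command keeps this PID
    # (PID 1 when used as the container entrypoint) and receives docker stop's signals.
    exec "$@"
}

# Hypothetical entrypoint.sh body, after defining the function above
# (dist/index.js is a placeholder for your app's entry file):
#   run_with_sidecar /usr/sbin/sshd node dist/index.js
```

You would save this as an executable script, for example a hypothetical `/entrypoint.sh`, and reference it with `ENTRYPOINT ["/entrypoint.sh"]`. Going through `yarn start` would put yarn between Docker's signal and node, which is why the sketch execs `node` directly. It is also worth running the container with `docker run --init`, which adds a small init process as PID 1 that forwards signals to its child and reaps zombie processes, such as a detached sshd that has exited.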

Run the built image with `docker run -p 7655:22 <image_id>`. In a different terminal, run `ssh root@localhost -p 7655`. Voila, you have successfully SSHed into a Docker container. Using SSH with your production app works the same way, except you use the server's IP address instead of localhost and the host port you mapped to 22.

To build and run the container without an app around it, replace `yarn start` with `node` and remove the `yarn`/`yarn build` directives.

Troubleshooting

If you run into difficulties with SSH, first `docker exec` into the container and check `rc-status`. The sshd service should be started. If it has crashed or is not present, you may not be starting it properly in ENTRYPOINT, or `sshd_config` may contain an incorrect setting. You may also be missing SSH host keys or trying to use a port that is already bound.

To troubleshoot further, run the container with `docker run -p 7655:22 -p 7656:10222 <image_id>`. Then open a shell with `docker exec -it <container_id> sh` and run `$(which sshd) -Ddp 10222` (`$(which sshd)` supplies the absolute path that sshd requires). This starts another sshd instance that listens on a different port (10222) with debug logging. From there you should see messages explaining why the process might crash.

If the process does not crash, attempt to connect by running `ssh root@localhost -p 7656` on the Docker host machine. You will then see additional logs in the previous terminal window with details on why the connection was refused.

Wrapping up

While this tutorial is pretty specific to running SSH in an Alpine Docker container, you may reuse this knowledge to run SSH in Docker images based on other Linux distros. Or you may have better luck configuring other sidecar services inside an Alpine Docker container. In any case, I hope you found what you were looking for in this post or learnt something new.

Original Link: https://dev.to/yakovlev_alexey/running-ssh-in-an-alpine-docker-container-3lop

Dev To

An online community for sharing and discovering great ideas, having debates, and making friends
