Benchmarking the AWS SDK

If you're building Serverless applications on AWS, you will likely use the AWS SDK to interact with some other service. You may be using DynamoDB to store data, or publishing events to SNS, SQS, or EventBridge.

Today the NodeJS runtime for AWS Lambda is at a bit of a crossroads. Like all available Lambda runtimes, NodeJS includes the aws-sdk in the base image for each supported version of Node.

This means Lambda users don't need to manually bundle this commonly used dependency into their applications. This reduces the deployment package size, which is key for Lambda. Functions packaged as zip files can be a maximum of 250 MB unzipped, including code and layers, and container-based functions support image sizes up to 10 GB.

The decision about which SDK you use and how you use it in your function seems simple at first - but it's actually a complex multidimensional engineering decision.

In the Node runtime, Node12.x, 14.x, and 16.x each contain the AWS SDK v2 packaged in the runtime. This means virtually all Lambda functions up until recently have been built to use the v2 SDK. When AWS launched the Node18.x runtime for Lambda, they packaged the AWS SDK v3 by default. Since the AWS SDK is likely the most commonly used library in Lambda, I decided to break down the cold start performance of each version across a couple of dimensions.
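If a function needs to know which SDK generation its runtime ships, one option is to read the `AWS_EXECUTION_ENV` environment variable that Lambda sets. This is a minimal sketch, not something from the benchmark itself: `bundledSdkMajor` is a hypothetical helper, and treating every runtime from Node 18 onwards as shipping v3 is an assumption that goes beyond the versions listed above.

```javascript
// Hypothetical helper: guess which AWS SDK major version is bundled in the
// Lambda Node.js runtime, using the mapping described above
// (Node 12/14/16 -> v2, Node 18 -> v3).
// Assumption: runtimes newer than Node 18 also ship v3.
function bundledSdkMajor(executionEnv) {
  // Inside Lambda, AWS_EXECUTION_ENV looks like "AWS_Lambda_nodejs18.x".
  const match = /nodejs(\d+)\.x/.exec(executionEnv || '');
  if (!match) return null; // not a recognised Node.js Lambda runtime
  return Number(match[1]) >= 18 ? 3 : 2;
}

// Prints null when run outside of Lambda.
console.log(bundledSdkMajor(process.env.AWS_EXECUTION_ENV));
```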

We'll trace the cold start and measure the time to load the SDK via the following configurations from the runtime:

- The entire v2 SDK

- One v2 SDK client

- One v3 SDK client

Then we'll use esbuild to tree-shake and minify each client, and run the tests again:

- Tree-shaken v2 SDK

- One tree-shaken v2 SDK client

- One tree-shaken v3 SDK client

Each of these tests was performed in my local AWS region, using functions configured with 1024 MB of RAM. The client I selected was SNS. I ran each test three times and took a screenshot of one run. Limitations are noted at the end.
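For a rough approximation of these measurements without a tracer, the first `require()` of a module can be timed during function initialization. This is only a sketch and not the method used to produce the traces in this post; `timeRequire` is a hypothetical helper, and it measures just the first load because Node caches modules afterwards.

```javascript
const { performance } = require('perf_hooks');

// Hypothetical helper: time how long the first require() of a module takes.
// Later calls hit Node's module cache and report close to 0ms.
function timeRequire(moduleName) {
  const start = performance.now();
  const loaded = require(moduleName);
  const ms = performance.now() - start;
  return { loaded, ms };
}

// In a Lambda handler file this would run at init time, outside the handler:
// const { loaded: SNS, ms } = timeRequire('aws-sdk/clients/sns');
// console.log(`SNS client loaded in ${ms.toFixed(1)}ms`);
```

Unlike a flamegraph, a single number like this does not show which nested modules account for the time.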

Loading the entire v2 SDK

There are a few common ways to use the v2 SDK.

In most blogs and documentation (including AWS's own, but not always), you'll find something like this:

    const AWS = require('aws-sdk');
    const snsClient = new AWS.SNS({});
    // ... some handler code

Although functional, this code is suboptimal as it loads the entire AWS SDK into memory. Let's take a look at the flamegraph for the cold start trace:

In this case we can see that this function loaded the entire aws-sdk in 324ms. Check out all of this extra stuff that we're loading!

Here we see that we're loading not only SNS, but also a smattering of every other client in the /clients directory, like DynamoDB, S3, Kinesis, Sagemaker, and so many small files that I don't even trace them in this cold start trace:

- First run: 324ms
- Second run: 344ms
- Third run: 343ms

Loading one v2 SDK client

Let's consider a more efficient approach. We can instead simply pluck the SNS client (or whichever client you please) from the library itself:

    const SNS = require('aws-sdk/clients/sns');
    const snsClient = new SNS({});

This should save us a fair amount of time. Check out the flame graph:

This is much nicer: 110ms. Since we're not loading clients we won't use, that saves us around 214 milliseconds compared with the 324ms it took to load the entire SDK!

- First run: 110ms
- Second run: 104ms
- Third run: 109ms

AWS SDK v3

The v3 SDK is entirely client-based, so we have to specify the SNS client specifically. Here's what that looks like in code:

    const { SNSClient, PublishBatchCommand } = require("@aws-sdk/client-sns");
    const snsClient = new SNSClient({});

This results in a pretty deep cold start trace:

We can see that the SNS client in v3 loaded in 250ms.

The Security Token Service (STS) contributed 84ms of this time:

- First run: 250ms
- Second run: 259ms
- Third run: 236ms

Bundled JS benchmarks

The other option I want to highlight is packaging the project using something like Webpack or esbuild. JS Bundlers transpile all of your separate files and classes (along with all node_modules) into one single file, a practice originally developed to reduce package size for frontend applications. This helps improve the cold start time in Lambda, as unimported files can be pruned and the entire handler becomes one file.
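As a rough illustration of what such a build can look like with esbuild's JavaScript API, here is a minimal sketch. The post does not list the exact settings used for these benchmarks, so the `esbuildOptions` helper, the file paths, and the `useRuntimeSdk` switch are all assumptions made for the example.

```javascript
// Hypothetical build settings; the exact esbuild flags used for the
// benchmarks in this post are not stated.
function esbuildOptions(entryFile, { useRuntimeSdk = false } = {}) {
  return {
    entryPoints: [entryFile],
    bundle: true,      // follow require()/import and inline dependencies
    minify: true,      // strip whitespace and shorten identifiers
    platform: 'node',  // keep Node built-ins (fs, path, ...) out of the bundle
    target: 'node18',
    outfile: 'dist/handler.js',
    // Leave the SDK out of the bundle to use the copy shipped in the runtime.
    external: useRuntimeSdk ? ['aws-sdk', '@aws-sdk/*'] : [],
  };
}

// Usage (requires `npm install esbuild`):
// require('esbuild').build(esbuildOptions('src/handler.js'));
```

Marking the SDK as `external` keeps the bundling benefit for your own code and other dependencies while still loading the SDK from the runtime image.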

AWS SDK v2 - minified in its entirety

Now we'll load the entire AWS SDK v2 again, this time using esbuild to transpile the handler and SDK v2:

    const AWS = require('aws-sdk');
    const snsClient = new AWS.SNS({});
    // ... some handler code

And here's the cold start trace:

You'll note that now we only have one span tracing the handler (as the entire SDK is now included in the same output file) - but the interesting thing is that the load time is almost 600ms!

- First run: 597ms
- Second run: 570ms
- Third run: 621ms

Why is this so much slower than the non-bundled version?

Handler-contributed cold start duration is primarily driven by the syscalls the runtime (NodeJS) uses to open and read files, e.g. fs.readSync. The handler file is now 7.5 MB uncompressed, and Node has to load it entirely.

Additionally I suspect that AWS can separately cache the built-in sdk with better locality (on each worker node) than your individual handler package, which must be fetched after a Lambda Worker is assigned to run your function.
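Since the size of the bundled handler matters here, it can help to check it after each build. This is a minimal sketch; `bundleSizeMb` is a hypothetical helper and `dist/handler.js` is an assumed output path.

```javascript
const fs = require('fs');

// Hypothetical helper: report the size of a bundled handler file in
// megabytes, since the whole file is read during a cold start.
function bundleSizeMb(filePath) {
  const { size } = fs.statSync(filePath); // size in bytes
  return size / (1024 * 1024);
}

// Example:
// console.log(`${bundleSizeMb('dist/handler.js').toFixed(1)} MB`);
```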

Minified v2 SDK - loading only the SNS client

Once again we're importing only the SNS client, but this time we've bundled and minified it. The handler code itself is unchanged:

    const SNS = require('aws-sdk/clients/sns');
    const snsClient = new SNS({});

You can see in the cold start trace that the SDK is no longer being loaded from the runtime. Instead, it's all part of the handler:

63ms is much better than the entire minified SDK from the previous test. Here are all three runs:

- First run: 63ms
- Second run: 71ms
- Third run: 67ms

Minified v3 SDK

Next, let's look at a minified project using the SNS client from the v3 SDK:

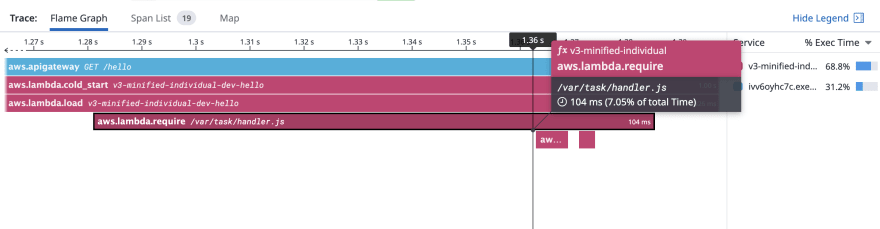

    const { SNSClient, PublishBatchCommand } = require("@aws-sdk/client-sns");
    const snsClient = new SNSClient({});

Here's the flamegraph:

Far better now: 104ms. After repeating this test a few times, I saw that 104ms tended towards the high end, and I measured some runs as low as 83ms. It's no surprise that this will vary a bit (see the caveats), but I thought it was interesting that we got around the same performance as the minified v2 SNS client code.

- First run: 104ms
- Second run: 83ms
- Third run: 110ms

I also find it fun to see that the core modules provided by Node itself are traced as well:

Scoring

Here's the list of fastest to slowest packaging strategies for the AWS SDK:

| Config | SDK load time |
|---|---|
| esbuild + individual v2 SNS client | 63ms |
| esbuild + individual v3 SNS client | 83ms |
| v2 SNS client from the runtime | 104ms |
| v3 SNS client from the runtime | 250ms |
| Entire v2 SDK from the runtime | 324ms |
| esbuild + entire v2 SDK | 570ms |

Caveats, etc

Measuring the cold start time of a Lambda function and drawing concrete conclusions at the millisecond level is a bit of a perilous task. Deep below a running Lambda function lives an actual server whose load factor is totally unknowable to us as users. There could be an issue with noisy neighbors, where other Lambda functions are stealing too many resources. The host could have failing hardware, older components, etc. It could be networked via an overtaxed switch or subnet, or simply have a bug somewhere in the millions of lines of code needed to run a Lambda function.
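Given that noise, one common way to make repeated measurements easier to compare is to look at the spread across runs rather than a single number. This is a minimal sketch of that idea, not part of the original benchmark; `summarize` is a hypothetical helper, and the sample input is the three "entire v2 SDK from the runtime" runs reported earlier.

```javascript
// Summarise repeated cold start measurements (in ms) so that a single
// outlier does not drive the conclusion.
function summarize(runsMs) {
  const sorted = [...runsMs].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  const median = sorted.length % 2 === 1
    ? sorted[mid]
    : (sorted[mid - 1] + sorted[mid]) / 2;
  const mean = sorted.reduce((sum, v) => sum + v, 0) / sorted.length;
  return { min: sorted[0], median, max: sorted[sorted.length - 1], mean };
}

console.log(summarize([324, 344, 343]));
// -> { min: 324, median: 343, max: 344, mean: 337 }
```

Three runs is still a very small sample, so this only makes the variation visible without removing it.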

Takeaways

Most importantly, users should know how long their function takes to initialize and understand specifically which modules are contributing to that duration.

As the old adage goes:

You can't fix what you can't measure.
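A coarse starting point for measuring is the `Init Duration` field that Lambda adds to the `REPORT` log line of a cold start. It covers the whole initialization phase rather than individual modules, so it shows how long init takes but not which modules are responsible. This is a minimal sketch of pulling that value out of a log line; `initDurationMs` is a hypothetical helper, and the sample line uses made-up values.

```javascript
// Extract the cold start init time from a Lambda REPORT log line.
// Returns null for warm invocations, which carry no "Init Duration" field.
function initDurationMs(reportLine) {
  const match = /Init Duration: ([\d.]+) ms/.exec(reportLine);
  return match ? Number(match[1]) : null;
}

// Illustrative log line with made-up values:
const sample = 'REPORT RequestId: 00000000-0000-0000-0000-000000000000\t' +
  'Duration: 12.34 ms\tBilled Duration: 13 ms\tMemory Size: 1024 MB\t' +
  'Max Memory Used: 70 MB\tInit Duration: 250.50 ms';

console.log(initDurationMs(sample)); // 250.5
```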

Based on this experiment, I can offer a few key takeaways:

- Load only the code you need. Consider adding a lint rule to disallow loading the entire v2 sdk.

- Small, focused Lambda functions will experience less painful cold starts. If your application has a number of dependencies, consider breaking it up across several functions.

- Bundling can be worthwhile, but may not always make sense.
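For the lint rule mentioned in the first takeaway, one possible approach is to combine two built-in ESLint rules. This is a sketch under assumptions rather than a recommended setup: it assumes an `.eslintrc.js`-style config, and the message text is invented for the example.

```javascript
// .eslintrc.js (sketch): flag imports of the whole v2 SDK.
const message = "Import a single client instead, e.g. require('aws-sdk/clients/sns').";

const eslintConfig = {
  rules: {
    // Catches: import AWS from 'aws-sdk'
    'no-restricted-imports': ['error', { paths: [{ name: 'aws-sdk', message }] }],
    // Catches: require('aws-sdk')
    'no-restricted-syntax': ['error', {
      selector: "CallExpression[callee.name='require'][arguments.0.value='aws-sdk']",
      message,
    }],
  },
};

module.exports = eslintConfig;
```

Both rules match the exact module name `aws-sdk`, so per-client paths such as `aws-sdk/clients/sns` remain allowed.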

For me, this means using the runtime-bundled SDK and importing clients directly. For you, that might be different.

As for the Node18.x runtime and v3 SDK, AWS has already said they're aware of this issue and working on it. I'll happily re-run this test when there's a notable change in performance.

Keep in mind, the AWS SDK is only one dependency! Most applications have several, or even dozens of dependencies in each function. Optimizing the AWS SDK may not have a large impact on your service, which brings me to my final point:

Try this on your own functions

I traced these functions using a feature I built for Datadog called Cold Start Tracing. It's available now for Python and Node, and I'd encourage you to try this yourself with your own functions.

Wrapping up

Original Link: https://dev.to/aws-builders/benchmarking-the-aws-sdk-2pd4
