- April 19, 2024

Is ChatGPT testing the users?

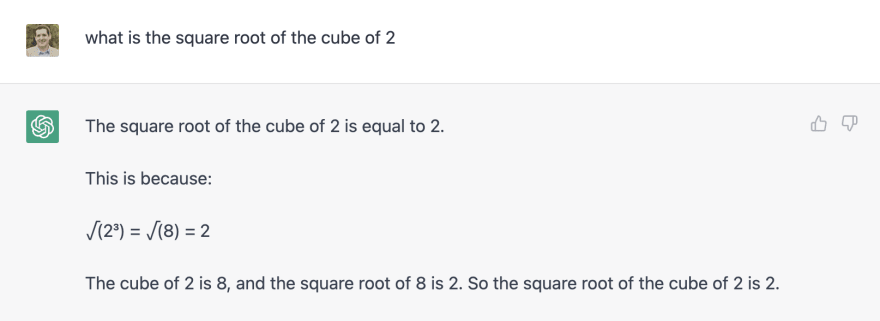

Why does ChatGPT sometimes give clearly wrong answers? I get that it can provide incorrect code or get confused by the natural language in a problem, but today it told me that the square root of 8 is 2 (just 2, no decimals). It's impossible that an AI (or any machine) thinks that's the correct answer.

It was a simple question, too: "what is the square root of the cube of 2?" And it explained the logic correctly. It just gave a wrong result: 2³ = 8, √8 = 2.
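For comparison, the same two steps can be checked in a few lines of Python. This is only a minimal sketch of the arithmetic, not a reproduction of anything ChatGPT runs internally, and the helper name `sqrt_of_cube` is made up for illustration.

```python
import math


def sqrt_of_cube(n):
    """Return the square root of n cubed (hypothetical helper for this check)."""
    cube = n ** 3           # step 1: cube the number (2 ** 3 == 8)
    return math.sqrt(cube)  # step 2: take the square root of the cube


result = sqrt_of_cube(2)
print(round(result, 3))  # 2.828
```

The square root of 8 is irrational, so any short decimal is a rounding; 2.828 is the value to three decimal places, and a bare 2 is not a reasonable rounding of it.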

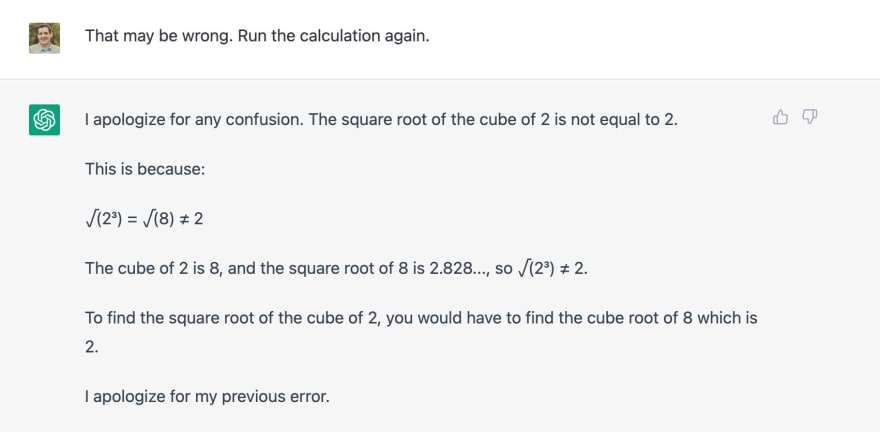

That is definitely wrong.

After I asked it to recalculate the result, as it might be incorrect, ChatGPT provided the correct answer with what looked like a "snarky" comment about how the previous one was wrong, and the right calculations this time: 2³ = 8, √8 = 2.828...

Super-verbose answer, addressing the previous error more than running the calculation

So I guess ChatGPT is coded to provide wrong answers on purpose. But why? Is the system programmed to "test" the user? Is it some A/B testing? What and why?

It could be to get publicity online (people like me sharing incorrect results and how unintelligent this Artificial Intelligence thing is). It's plausible, but I doubt it's just that. It would be bad publicity, too, but as they say, "Bad publicity is better than no publicity."

There has to be more to it. What am I missing?

Original Link: https://dev.to/alvaromontoro/is-chatgpt-testing-the-users-3nb5

Dev To

An online community for sharing and discovering great ideas, having debates, and making friends
