ReLU Activation Function [with python code]

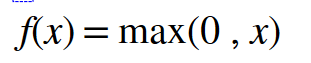

The rectified linear activation function (ReLU) is a piecewise linear function: if the input x is positive, the output is x; otherwise, the output is zero.

The mathematical representation of the ReLU function is:

$$\mathrm{ReLU}(x) = \max(0, x)$$
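To make the definition concrete, here are a few values worked out by hand. The inputs are arbitrary illustrative numbers, not taken from the original post:

$$\mathrm{ReLU}(-3) = \max(0, -3) = 0, \qquad \mathrm{ReLU}(0) = \max(0, 0) = 0, \qquad \mathrm{ReLU}(2.5) = \max(0, 2.5) = 2.5$$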


The coding logic for the ReLU function is simple:

    if input_value > 0:
        return input_value
    else:
        return 0

A simple Python function to mimic the ReLU function is as follows:

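    import numpy as np

    def ReLU(x):
        # Clamp every negative element to zero and keep the rest unchanged
        data = [max(0, value) for value in x]
        return np.array(data, dtype=float)

The derivative of ReLU is:

$$\frac{d}{dx}\,\mathrm{ReLU}(x) = \begin{cases} 1 & \text{if } x > 0 \\ 0 & \text{otherwise} \end{cases}$$

Strictly speaking, the derivative is undefined at x = 0; the code in this post uses 0 there by convention.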

A simple Python function to mimic the derivative of the ReLU function is as follows:

    def der_ReLU(x):
        # Gradient is 1 for positive elements and 0 everywhere else
        data = [1 if value > 0 else 0 for value in x]
        return np.array(data, dtype=float)

ReLU is widely used today, but it has a known problem. For any input less than or equal to zero, both the output and the derivative are zero, so no gradient flows back through that unit during backpropagation and the weights feeding it stop updating. A neuron stuck in this regime is effectively dead, which is why the problem is commonly known as Dying ReLU. A common remedy is a modified version of ReLU called Leaky ReLU.
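To illustrate how Leaky ReLU avoids a zero gradient, here is a minimal sketch. It assumes the common form in which negative inputs are scaled by a small slope. The names `leaky_relu` and `der_leaky_relu` and the default `alpha=0.01` are illustrative choices, not taken from the original post.

```python
import numpy as np

def leaky_relu(x, alpha=0.01):
    # x for positive inputs, alpha * x otherwise (alpha=0.01 is an assumed default)
    x = np.asarray(x, dtype=float)
    return np.where(x > 0, x, alpha * x)

def der_leaky_relu(x, alpha=0.01):
    # Derivative: 1 for positive inputs, alpha otherwise, so it is never exactly zero
    x = np.asarray(x, dtype=float)
    return np.where(x > 0, 1.0, alpha)

x = np.array([-2.0, -0.5, 0.0, 1.5])
print(leaky_relu(x))      # negative inputs keep a small negative output
print(der_leaky_relu(x))  # every element has a non-zero gradient
```

Because the gradient for negative inputs is `alpha` rather than 0, a unit that receives negative inputs can still update its weights, at the cost of one extra hyperparameter.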

Python Code

    import numpy as np
    import matplotlib.pyplot as plt

    # Rectified Linear Unit (ReLU)
    def ReLU(x):
        data = [max(0, value) for value in x]
        return np.array(data, dtype=float)

    # Derivative for ReLU
    def der_ReLU(x):
        data = [1 if value > 0 else 0 for value in x]
        return np.array(data, dtype=float)

    # Generating data for the graph
    x_data = np.linspace(-10, 10, 100)
    y_data = ReLU(x_data)
    dy_data = der_ReLU(x_data)

    # Graph: plot ReLU and its derivative on the same axes
    plt.plot(x_data, y_data, x_data, dy_data)
    plt.title('ReLU Activation Function & Derivative')
    plt.legend(['ReLU', 'der_ReLU'])
    plt.grid()
    plt.show()

To read more about activation functions, see the link in the original post.
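The functions in this post loop over the input with list comprehensions, which is easy to read but runs element by element in Python. As an alternative, here is a sketch of the same two functions written with NumPy array operations. The names `relu_vectorized` and `der_relu_vectorized` are hypothetical and do not appear in the original post.

```python
import numpy as np

def relu_vectorized(x):
    # Element-wise maximum of 0 and x, computed on the whole array at once
    x = np.asarray(x, dtype=float)
    return np.maximum(0.0, x)

def der_relu_vectorized(x):
    # Boolean mask (x > 0) converted to 1.0 / 0.0
    x = np.asarray(x, dtype=float)
    return (x > 0).astype(float)

x_data = np.linspace(-10, 10, 100)
y_data = relu_vectorized(x_data)
dy_data = der_relu_vectorized(x_data)
print(y_data.min(), y_data.max())
```

Both versions should produce the same values as the list-comprehension code for the same input, and the vectorized form also accepts multi-dimensional arrays.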

Original Link: https://dev.to/keshavs759/relu-activation-function-with-python-code-pap

Dev To

An online community for sharing and discovering great ideas, having debates, and making friends
